Why We're Smart

Most people believe humans evolved intelligence because using tools was an advantage. However, I believe tool use was secondary. Group cooperation was the primary advantage conferred by intelligence. You see, cooperation is fundamentally difficult.

This insight coalesced when I was reading about Mark Satterthwaite, an economist at Northwestern’s Kellogg School of Management. He’s famous for two important impossibility theorems: (1) the Myerson-Satterthwaite Theorem and (2) the Gibbard-Satterthwaite Theorem.

Informally, (1) says that there is no bargaining mechanism that can guarantee a buyer and seller will trade if there are potential gains from trade, while (2) says that there is no voting mechanism for determining a single winner that can induce people to vote their true preferences. In both cases, the reason for the impossibility is that people have incentives to hide their actual values to achieve a strategic advantage.

Add these to the Prisoner’s Dilemma and Arrow’s Impossibility Theorem on the list of fundamental barriers to cooperation (Holmstrom’s Theorem is another good one; it explains why you can’t get everyone in a firm to exert maximum effort). By “fundamental”, I mean there is no general solution. So the evolutionary process cannot just discover a mechanism that guarantees cooperation when it is efficient. There will always be the opportunity for individuals to subvert the cooperative process to promote themselves, thus creating selection pressure against the cooperation mechanism.

(Note that there is a hack: make sure each individual has the same genes. This is how multicellular and hive organisms get around the problem. But the existence of cancer in the former case and the reduced genetic diversity in the latter case make them limited solutions.)

To achieve extensive cooperation in large groups, individuals need the ability to model the strategic situation, estimate the payoffs to various group members, and continuously assess what strategies other members may be playing. On top of that, there’s an arms race between deceiving and detecting deception. It’s the old, “I know that you know that I know…” schtick. The smarter you are, the further you can compute this series.

Bottom line: the impossibility theorems mean the only way to achieve cooperation is to have the machinery in place to make detailed case-by-case determinations. We’ve talked about the Dunbar Number before: the maximum size of primate groups is determined in large part by a species’ average neocortical volume. I claim you need to be smarter to process more complex strategic configurations and maintain models of more individuals’ goals.

If I’m right, there are two interesting implications. First, politics will be with us forever. No magical technology or philosophical enlightenment will eliminate it. Second, if we ever encounter intelligent aliens, they’ll have politics too. Nothing else about them may be recognizable, but they’ll have analogs of haggling over price and building political coalitions.

Simple Interventions

And now for something completely different… For 25+ years, I have suffered from a propensity toward lots of bad upper respiratory infections (URIs) and associated secondary bacterial infections. Recently, I have found two simple interventions that appear to have solved this problem and dramatically improved my quality of life.

First, the history. Ever since I can remember, at least back to high school, I have come down with more than my fair share of URIs. This source says that adults average 2-4 colds per year. I typically averaged 6-8. Moreover, my URIs seemed more severe than other people’s. This study on zinc lozenges says the average length of an untreated cold is 7.6 days. I typically averaged 10-14 days.

Even worse, I developed a lot of secondary bacterial bronchitis and sinusitis, which meant a lot of antibiotics. There were two periods, one in college and one in my late 20s, where I had 3 sinus infections per year for several years. They’d put me on inhaled steroids, which would solve the problem for the six months I was on them plus another six months, after which the sinus infections would return.

Finally, about 2.5 years ago, my father suggested I try a nasal irrigation syringe. I had tried a Neti pot previously without much luck, but the syringe seemed more usable and to generate better irrigation. After about 2 weeks (and two instances of experiencing copious amounts of amazingly neon-colored discharge), my sinuses were clear for the first time in years. I haven’t had a single sinus infection since.

Now, I still had the URIs. They weren’t as bad because I didn’t have painful sinus pressure or develop sinus infections, but they still sucked. This winter, I was on my normal trajectory of 3 colds between Halloween and New Year’s. Then I went for my physical in January and my doctor said my serum vitamin D was very low: 17 ng/ml when the recommended range is 30-100. So I started taking 1,000 IU of D-3 twice a day.

I haven’t had a severe URI since. I think I’ve had a couple of colds, but their quality is completely different than in the past. Hardly even worth mentioning compared to my previous experience. Could be coincidence. However, vitamin D is crucial to enabling the activation of your immune system’s T-cells. So an improved immune response makes sense.

Rinsing my sinuses with saline once or twice a day and taking a vitamin supplement twice a day are pretty simple interventions. Probably cost 10-20 cents per day. Extremely low risk of adverse reactions. But if they only improve my experience to the average (and I seem to be doing better than average now), I can expect about 60 more days per year free of URI symptoms. If I’d known about this 25 years ago, that would be a cumulative 4 years saved!
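For anyone who wants to check the arithmetic, here is a rough sketch. It assumes the midpoint of each range quoted above (7 colds of 12 days each for me, 3 colds of 7.6 days each for the average adult). The midpoint choice and the variable names are my assumptions for illustration, not measured data.

```python
# Midpoints of the ranges quoted in the post (choosing midpoints is an assumption).
my_colds_per_year = (6 + 8) / 2      # "I typically averaged 6-8"
my_days_per_cold = (10 + 14) / 2     # "I typically averaged 10-14 days"
avg_colds_per_year = (2 + 4) / 2     # "adults average 2-4 colds per year"
avg_days_per_cold = 7.6              # average length of an untreated cold


def sick_days(colds_per_year, days_per_cold):
    """Expected days per year spent with URI symptoms."""
    return colds_per_year * days_per_cold


# Days gained per year by moving from my old baseline to the adult average.
days_saved_per_year = (
    sick_days(my_colds_per_year, my_days_per_cold)
    - sick_days(avg_colds_per_year, avg_days_per_cold)
)

# Cumulative saving over the 25 years mentioned in the post.
years_saved = days_saved_per_year * 25 / 365

print(f"{days_saved_per_year:.1f} days per year, {years_saved:.1f} years over 25 years")
```

With these midpoints the saving comes out to roughly 61 days per year and a bit over 4 years across 25 years, which lines up with the figures above. Using the low or high end of each range instead would move the result quite a bit.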

I could have started a whole other startup with the time I spent sick in bed or barely functional at work.

It seems like as our health diagnostic, tracking, and analytic technologies progress, we should be able to identify these situations where simple interventions can result in dramatic health improvements. I imagine we could see a tremendous improvement in economic productivity if my experience is any barometer.

What Seed Funding Bubble?

At the moment, people seem to believe there’s a “bubble” in seed-stage technology funding. Many limited partner investors in VC funds I’ve spoken with have raised the concern and related topics seem popular on Quora (see here, here, and here). However, I’ve examined the data and it argues pretty strongly against a widespread seed-stage bubble.

Rather, I think the increased attention that top startups attract these days induces availability bias. Because Y Combinator and superangels generate pretty intense media coverage, people read more frequently about the few big investments in seed-stage startups. They confuse the true frequency of high valuations with the amount of coverage. Of course, they never read about all the other seed-stage startups that don’t get high valuations.

But if you look at the data on the aggregate amount of seed funding and the average deal size, I think it’s very hard to argue for a general seed-stage bubble. At worst, there may be a very localized bubble centered around consumer Internet startups based in the Bay Area.

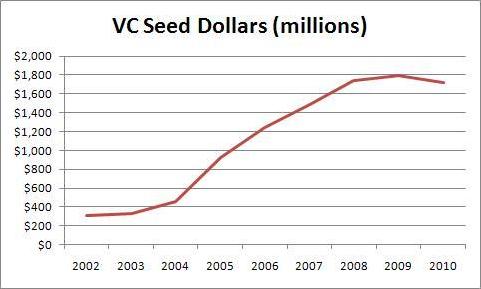

First, look at the amount of seed funding by angels over the last nine years, as reported by the Center for Venture Research. I calculated the amount for each year by multiplying the reported total amount of funding by the reported percentage going to seed and early stage deals. (Note: for some reason the CVR didn’t report the percentage in 2004, so I interpolated that data).
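The calculation described above can be sketched roughly as follows. The numbers in the example are made-up placeholders, not CVR figures, and the linear interpolation between neighboring years is an assumption about how a missing percentage might be filled in. The function names are hypothetical.

```python
def fill_missing_linear(values_by_year):
    """Fill None entries by linear interpolation between the nearest known years.

    Assumes every missing year has a known year on both sides; a gap at
    either end of the series would raise ValueError.
    """
    years = sorted(values_by_year)
    filled = dict(values_by_year)
    for year in years:
        if values_by_year[year] is None:
            prev_year = max(y for y in years if y < year and values_by_year[y] is not None)
            next_year = min(y for y in years if y > year and values_by_year[y] is not None)
            weight = (year - prev_year) / (next_year - prev_year)
            start, end = values_by_year[prev_year], values_by_year[next_year]
            filled[year] = start + weight * (end - start)
    return filled


def seed_dollars(total_by_year, seed_share_by_year):
    """Seed-stage dollars per year = total angel dollars x seed/early-stage share."""
    shares = fill_missing_linear(seed_share_by_year)
    return {year: total_by_year[year] * shares[year] for year in total_by_year}


# Placeholder inputs for illustration only (NOT real CVR data).
example_totals = {2003: 10.0, 2004: 20.0, 2005: 30.0}    # $B, hypothetical
example_shares = {2003: 0.50, 2004: None, 2005: 0.25}    # 2004 share missing

example_seed = seed_dollars(example_totals, example_shares)
print(example_seed)
```

In the placeholder example, the missing 2004 share is filled with the average of its neighbors before being multiplied by that year's total.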

As you can see, the amount of seed funding by angels in 2009-2010 was down by half from its level in 2004-2006. Hard to have a bubble when you’re only investing 50% of the dollars you were at the recent peak. But perhaps it’s a pricing issue and angels are pumping more dollars into each startup. While the CVR doesn’t break down the average investment amount at each stage, we can calculate the average investment amount across all stages and use it as a rough index for what is probably going on at the seed and early stage (the index of 100 corresponds to a $436K investment).

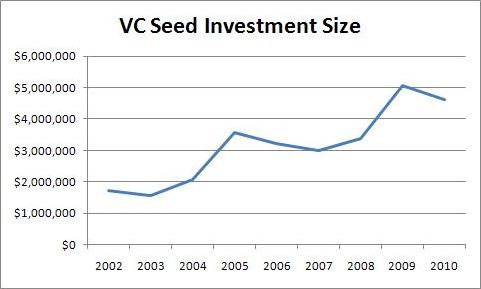

The amount invested in each startup in 2010 was down 35% from its 2006 peak. Now, the investment amount is not the same as the valuation. However, for a variety of reasons (anchoring on historical ownership, capitalization table management, and price equilibrium for the marginal startup), I doubt angels have radically changed the percentage of a company they try to own. So deal size shifts should be a good proxy for valuation shifts.

Now, you might think that VC moves in the seed stage market could be a factor. Probably not, for two reasons. First, VCs account for a much smaller share of the seed stage market. Second, what gets counted as the seed stage in the VC data isn’t what most of us think of as seed stage investments. Check out the seed dollar chart and the average seed investment data from the National Venture Capital Association.

Notice that amount of seed funding by VCs has remained flat for the last three years. Moreover, angels invest dollars in the seed stage at a rate of 3:1 compared to VCs. So VCs probably aren’t contributing to a widespread seed bubble. But the story takes a strange twist if you look at the average size of VCs’ seed stage investments.

The size has increased since 2007. But look at the absolute level! $4M+ seed rounds? I’m starting to think that “seed” does not mean the same thing to VCs as it does to angels and entrepreneurs. Obviously, VCs cannot be affecting what I think of as the seed round very much. However, they could be generating the impression of a bubble by enabling a few “mega-seed” deals. VCs did 373 seed deals in 2010 while angels did around 20,000 (NVCA and CVR data, respectively).

The last factor we have to account for is the superangels. Most of them are not members of the NVCA. However, they probably aren’t counted by the CVR surveys of individual angels and angel groups either. ChubbyBrain has a list of the superangels that seems pretty complete; I can’t think of anyone I consider a superangel who isn’t on it. Of the 16, there are known fund sizes for 13. Two of them (Felicis and SoftTech VC) are members of the NVCA and thus included in that data. The remaining 11 total $253M.

Now, there are probably some smaller, lesser known superangels not on this list. However, many on the list will not invest all their dollars in a single year and some will invest dollars in follow-on rounds past the seed stage. So I’m confident that $253M is a generous estimate of the superangel dollars that go into the seed stage each year. That’s only about 3% of angels and VCs combined.

Just to really drive the point home, here’s a graph of all seed dollars, assuming superangels did $253M per year in 2009 and 2010. Seed funding is down $5.4B or 40% from its peak in 2005! So I don’t believe there’s a bubble.
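As a sanity check, the rounded figures quoted above hang together. The sketch below backs out the totals implied by "down $5.4B or 40%" and compares the superangels' $253M against the rest. The implied totals are derived from rounded numbers in the text, so treat them as approximations rather than values from the spreadsheet.

```python
# Figures quoted in the post (rounded).
drop_billions = 5.4            # "down $5.4B"
drop_fraction = 0.40           # "or 40% from its peak in 2005"
superangels_billions = 0.253   # assumed superangel seed dollars per year

# Totals implied by the rounded figures (approximations, not spreadsheet values).
implied_peak_total = drop_billions / drop_fraction
implied_latest_total = implied_peak_total - drop_billions

# Superangel dollars relative to angels and VCs combined.
angels_and_vcs = implied_latest_total - superangels_billions
superangel_share = superangels_billions / angels_and_vcs

print(f"implied peak: ${implied_peak_total:.1f}B")
print(f"implied latest total: ${implied_latest_total:.1f}B")
print(f"superangels vs. angels + VCs: {superangel_share:.1%}")
```

The implied share lands a little above 3%, which matches the "about 3%" estimate.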

(The spreadsheet with all my data is here.)

Ratcheting State and Local Taxes

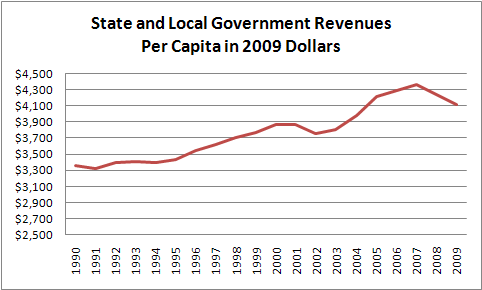

Yesterday, Mark Perry at Carpe Diem looked at state and local tax revenues. Then Don Boudreaux at Cafe Hayek observed they should be adjusted for inflation. Given my previous analysis (here, here, and here), I thought I’d chime in and adjust them for population*:

Over the entire period, real per-capita revenues rose 22%. On the graph, you can clearly see the 2001-2002 and 2008-2009 recessions. If we go peak to peak in the last business cycle, 2001 to 2007, the growth was 13%. If we go trough to trough, 2002-2009, the growth was 9%.

Notice that even from the peak of the previous business cycle in 2001 to the trough of the current business cycle in 2009, real per-capita revenues were still up 6%. So the recession is clearly not the proximal cause of the state and local budget problems.

State and local government agencies have more inflation-adjusted dollars per person today than they did at the peak of the boom in 2000.

Their revenues are consistently growing. The problem is that they can’t get their damned spending under control!

* State and local government tax revenue from this Census Bureau data. 2000-2009 population estimates from here. 1990-1999 from here. Ironically, 2010 population data is not yet available so I couldn’t generate a per-capita datapoint for 2010. I used the CPI-U series for inflation. I did all the analysis in this spreadsheet file.
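For readers who want to reproduce this kind of adjustment, here is a minimal sketch of the two steps: deflate by the CPI, then divide by population. The function names and the sample inputs are hypothetical placeholders, not the Census or CPI-U values used in the spreadsheet.

```python
def real_per_capita(nominal_revenue, cpi, base_cpi, population):
    """Convert nominal revenue into base-year dollars per person."""
    real_revenue = nominal_revenue * (base_cpi / cpi)   # inflation adjustment
    return real_revenue / population                    # population adjustment


def pct_growth(start, end):
    """Percentage change from start to end."""
    return (end - start) / start * 100


# Placeholder inputs for illustration only (NOT actual Census or CPI-U data).
early = real_per_capita(nominal_revenue=500.0, cpi=100.0, base_cpi=100.0, population=50.0)
late = real_per_capita(nominal_revenue=1000.0, cpi=160.0, base_cpi=100.0, population=50.0)

print(f"real per-capita growth: {pct_growth(early, late):.0f}%")
```

In this toy example nominal revenue doubles, but once inflation is removed the real per-capita growth is much smaller. The same two functions applied year by year would give a series like the one in the graph.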

My Wife Plays a Labor Economist

My wife has an Art History degree from Princeton. However, she often has excellent insights into economics. So I think either (a) one of the reasons she fell in love with me is that she’s a latent economics geek or (b) she loves me so much that she actually pays enough attention to my economics ramblings that some of it rubs off.

About a year ago, she came up with a solution to the “employee union problem”. With the recent public employee union showdown in Wisconsin, I thought I should share it with you. What’s particularly ironic is that she’s out in front with some pretty serious economists in thinking about this issue. For example, check out this Wall Street Journal article by Robert Barro, a Harvard professor and author of my undergraduate macroeconomics textbook.

Most people think that unions are simply workers exercising their rights to band together. Actually, this isn’t the case. As Barro points out, unions are monopolies that are specially exempted from anti-trust law. Moreover, the government actually enforces their monopolies.

The key law here is the National Labor Relations Act (NLRA) as administered by the National Labor Relations Board (NLRB). The NLRB protects the rights of employees to collectively “improve working terms and conditions” regardless of whether they are part of a union. In addition, the NLRB can force all employees at a private firm to join a union, or at least pay union dues. This process is called “certification”. Once a union is certified, it has the presumptive right to negotiate on behalf of all employees at the firm, and collect dues from them, for one year.

After that, it is possible to “decertify” a union. However, the union usually has enough time to consolidate its power and then use it to keep the rank and file in line. When you have monopoly power, you have the opportunity to abuse it. In fact, there’s a whole government agency devoted to investigating such abuses: the Office of Labor-Management Standards. UnionFacts.com has helpfully collated the related crime statistics for 2001-2005. Assuming that only a fraction of abusive behavior faces prosecution, these statistics are pretty sobering.

Of course, federal, state, and local government employees are exempt from the NLRA. You might think this exemption is a good thing for limiting union power. However, what it means in practice is that each level of government is free to offer special treatment to its employees’ unions without oversight from the NLRB. As you can imagine, the politicians and public employee union leaders get nice and cozy. The politicians give the unions a sweet deal and the unions give politicians their political support. Everybody wins. Except ordinary citizens.

Personally, my solution would have been to completely eliminate the government-enforced monopoly of unions. However, I admit this blanket approach could swing power too far towards management in some industries. My wife’s solution is better. She says the unions can get monopolies, but only for a set period of time. Say 3 or 5 years.

From an economics standpoint, this approach is really insightful. First, it removes union leaders’ incentive to form a union just to accumulate power. It will all go away pretty soon. Second, it prevents originally well meaning union leaders from getting corrupted over time. Pretty soon they’re ordinary workers again. Third, it does provide help to those workers who feel management is truly abusing them. They can form a union and get better treatment. When the union’s existence terminates, they can still bargain collectively, just not exclusively. If management tries to screw them again, they will have the example of how to work together. An economist would call this “moving to a better equilibrium”.

I’ll admit this solution isn’t perfect. Some management abuses will slip through the cracks. But I’m pretty confident they’ll be less extensive than the current union abuses. It’s also probably better than my original thought of banning unions altogether. And there’s some small chance my wife’s approach would actually be politically feasible. Nice work, Jane!

State Budget Redux

You may recall my two posts on the California budget back in May 2009. I just haven’t had the heart to dive back into this issue again, even though it’s obviously timely. However, I thought it was worth mentioning this article in Reason Magazine highlighted in one of today’s Coyote Blog posts.

Funnily enough, the article was published in the May 2009 issue. So I guess great minds not only think alike, they do so at the same time. What struck me about the article was that they performed a similar exercise to that of my post, which looked at real, per-capita spending in California. Reason compared actual revenues to a constant real, per-capita baseline totaled across all 50 states. Here are the money graphs for all revenues and just taxes:

When times were relatively good, the money was flowing in. So we went on a spending binge. When we hit the recession in 2008, we discovered that this level of real, per-capita revenue was not permanent. But by then, a bunch of people had become accustomed to getting their money from the states and it was hard to cut them off.

One budget watchdog estimates that the states are in a combined $112B budget hole for 2012. As you can see, if we’d stuck to our 2002 baseline, we’d have accumulated plenty of surplus during the good times to plug this hole. But asking a state to save money is like asking an addict to go without a fix.

Production Function Space and Hiring

Previously, I used my Production Function Space (PFS) hypothesis to illuminate the differences between startups, small businesses, and large companies. Now, I’d like to turn my attention to the implications of PFS on a firm’s demand for labor.

I don’t know about you, but a lot of hiring behavior baffles me. I see companies that appear clearly and consistently understaffed or overstaffed, relative to demand for their offerings. Then the hiring process itself is strange. I’ve consistently seen companies burn all kinds of energy and incur all sorts of angst just to come up with a job description. Shouldn’t it be obvious what isn’t getting done? Why do they delegate apparently core aspects of production to contractors? And despite decades of evidence, why do firms insist on using selection procedures like unstructured interviews that aren’t very effective? Also, there’s the mystery of why incentive-based pay doesn’t work in general despite plenty of evidence that humans respond well to incentives in other circumstances.

Can the PFS hypothesis shed any light? I think so. But this hypothesis implies that a firm’s labor decisions are substantially more complicated than we thought, so don’t expect a nice “just-so” story.

I see three big implications if many employees benefit the firm primarily through searching PFS instead of producing goods and services:

- Uncertainty. The payoffs from searching PFS are uncertain. In many cases, they’re really uncertain. You could end up with a curious but unmarketable adhesive or you could end up with the bestselling Post-it notes. You could end up with just another search engine or you could combine it with AdWords and end up with Google. A search over a given region is essentially a call option on implementing discovered production functions.

- Specificity. Economists refer to an asset as “specific” if its usefulness is limited primarily to a certain situation. The classic example is the railroad track leading up to the mouth of a coal mine. I think employees searching PFS are fairly specific. Each firm’s ability to exploit production functions is rather unique. Google and Microsoft can’t do exactly the same things. Moreover, each firm’s strategy for exploring PFS is different. So it takes time for an employee to “tune” himself to searching PFS for a particular firm. All other things being equal, an employee with 3 months on the job is not as effective at searching PFS as an employee with 3 years. And an employee that leaves firm A will not be as effective at searching PFS for firm B for a significant period of time. Think of specificity in searching PFS as a fancy way of justifying the concept of “corporate culture”.

- Network Effect. The number of people searching a given region seems to matter. I’m not at all sure if it’s a network effect, a threshold effect, or something else. But there seems to be a “critical mass” of people necessary to search a coherent region of PFS. You need a certain collection of skills to evaluate the economic feasibility of a production function. The larger a firm’s production footprint and the larger the search area, the greater the collective skill that is required.

Let’s start with hiring and firing decisions. As you can see, firms face a really complex optimization problem when choosing how many people to employ and with what skills. Suppose demand for a firm’s products suddenly declines. What’s the optimal response? Due to the network effect, firing x% of the workforce reduces the ability to search PFS by more than x%. Due to specificity, this reduction in capability will last much longer than the time it takes to rehire the same number of people. Thus, waiting to see if the drop in demand is temporary or permanent provides substantial option value. Of course, a small firm doesn’t have much cushion, so may have to lay off people anyway.

Thus I predict a sudden drop in demand will result in disproportionately low or significantly delayed layoffs, and the disproportion or delay will be positively correlated with firm size. Moreover, firms will tend to concentrate layoffs among direct production workers to minimize the effect on searching PFS. This tendency may explain why they delegate some apparently core functions. Being able to flexibly adjust those direct costs preserves the ability to search PFS. This hypothesis implies that the more volatile the demand for a firm’s products, the more it will outsource direct production.

Conversely, what should a firm do if demand suddenly increases? Based on the PFS hypothesis, I have three predictions: the firm will (1) delay hiring to see if the demand increase is sustained, (2) “over hire” relative to the size of the demand increase, and (3) hire a disproportionate number of people outside of core production. The reason is simple: diversification. Due to uncertainty, the best way for a firm to ensure its long-term survival is to have a large portfolio of ongoing PFS searches. Extra dollars should therefore be allocated to PFS searching labor rather than capital or direct production labor. However, because a firm knows that it will be reluctant to fire in the future, it will initially be conservative in deciding to hire.

It seems like these predictions should be testable. I wish I had a research assistant to go through the available data and crank through some econometric analysis. I’m thinking the next step is to work through the implications of PFS searching on employee behavior. Unless anyone has other thoughts.

Production Function Space and Government Inefficiency

This weekend, I was at a party chatting with two of my friends who have PhDs in Education. They were explaining the sources of the inefficiency they see in the public school system. That’s when I had yet another Production Function Space (PFS) epiphany.

Recall my previous discussion about how I think large companies devote much of their energy to searching PFS rather than implementing specific production functions. However, in listening to how public education works, the contrast struck me.

Only a tiny sliver of effort goes to searching PFS in public education. Instead, the vast majority of overhead consists of two tasks. First, because there’s no market discipline, government organizations use a rigid system of rules in an effort to prevent waste. So they have a bunch of people and processes dedicated to enforcing these rules: the bureaucracy. Of course, in an ever-changing landscape, these rules aren’t anywhere close to optimal for long. But hey, they are the rules. So a big chunk of effort that would go towards innovation in a commercial organization actually goes toward preventing innovation in a government organization. Because there’s no systematic way to tell waste from innovation without a market.

Without market signals, there’s also no obvious way for employees to improve their position by improving organizational performance. But don’t count out human ingenuity. That’s the second substitute for searching PFS: improving job security and job satisfaction. So you see workers seeking credentialing, tenure, guaranteed benefit plans and limitations on the number of hours spent in staff meetings.

From what I’ve read, this observation generalizes to other government agencies. If searching PFS is a major source of continuous improvement in commercial organizations, its conspicuous absence in government organizations means they are even less efficient than we thought. We don’t just lose out on the discipline of market signals today. We lose out on the inspiration they provide for improvement tomorrow.

More Angel Investing Returns

According to our Web statistics, my post on Angel Investing Returns was pretty popular, so I thought I’d dive a little deeper into the process of extracting information from this data set. At the end of the last post, I hinted that there might be some value in, “…analyzing subsets of the AIPP data…” Why would you want to do this? To test hypotheses about angel investing.

Now, you must be careful here. You should always construct your hypotheses before looking at the data. Otherwise, it’s hard to know if this particular data is confirming your hypothesis or if you molded your hypothesis to fit this particular data. You already have the challenge of assuming that past results will predict future results. Don’t add to this burden by opening yourself to charges of “data mining”.

I can go ahead and play with this data all I want. I already used it to “backtest” RSCM‘s investment strategy. We developed it by reading research papers, analyzing other data sources, and running investment simulations. When we found the AIPP download page, it was like Christmas: a chance to test our model against new data. So I already took my shot. But if you’re thinking about using the AIPP data in a serious way, you might want to stop reading unless you’ve written your hypotheses down already. As they say, “Spoiler alert.”

But if you’re just curious, you might find my three example hypothesis tests interesting. They’re all based loosely on questions that arose while doing research for RSCM.

Hypothesis 1: Follow On Investments Don’t Improve Returns

It’s an article of faith in the angel and VC community that you should “double down on your winners” by making follow on investments in companies that are doing well. However, basic portfolio and game theory made me skeptical. If early stage companies are riskier, they should have higher returns. Investing in later stages just mixes higher returns with lower returns, reducing the average. Now, some people think they have inside information that allows them to make better follow-on decisions and outperform the later stage average. Of course, other investors know this too. So if you follow on in some companies but not others, they will take it as a signal that the others are losers. I don’t think an active angel investor could sustain much of an advantage for long.

But let’s see what the AIPP data says. I took the Excel file from my last post and simply blanked out all the records with any follow on investment entries. The resulting file with 330 records is here. The IRR was 62%, the payout multiple was 3.2x, and the hold time was 3.4 years. That’s a huge edge over 30% and 2.4x!

Now, let’s not get too excited here. There’s a difference between deals where there was no follow on and deals where an investor was using a no-follow-on strategy. We don’t know why an AIPP deal didn’t have any follow on. It could be that the company was so successful it didn’t need more money. Of course, the fact that this screen still yields 330 out of 452 records argues somewhat against a very specific sample bias, but there could easily be more subtle issues.

Given the magnitude of the difference, I do think we can safely say that the conventional wisdom doesn’t hold up. You don’t need to do follow on. However, without data on investor strategies, there’s still some room for interpretation on whether a no-follow-on strategy actually improves returns.

Hypothesis 2: Small Investments Have Better Returns than Large Ones

Another common VC mantra is that you should “put a lot of money to work” in each investment. To me, this strategy seems more like a way to reduce transaction costs than improve outcomes, which is fine, but the distinction is important. Smaller investments probably occur earlier so they should be higher risk and thus higher return. Also, if everyone is trying to get into the larger deals, smaller investments may be less competitive and thus offer greater returns.

I chose $300K as the dividing line between small and large investments, primarily because that was our original forecast of average investment for RSCM (BTW, we have revised this estimate downward based on recent trends in startup costs and valuations). The Excel file with 399 records of “small” investments is here. The IRR was 39% and the payout multiple was 4.0x. Again, a huge edge over the entire sample! Interestingly, less of an edge in IRR but more of an edge in multiple than the no-follow-on test. But smaller investments may take longer to pay out if they are also earlier. IRR really penalizes hold time.

Interesting side note. When I backtested the RSCM strategy, I keyed on investment “stage” as the indicator of risky early investments. Seeing as how this was the stated definition of “stage”, I thought I was safe. Unfortunately, it turned out that almost 60% of the records had no entry for “stage”. Also, many of the records that did have entries were strange. A set of 2002 “seed” investments in one software company for over $2.5M? A 2003 “late growth” investment in a software company of only $50K? My guess is that the definition wasn’t clear enough to investors filling out the survey. But I had committed to my hypothesis already and went ahead with the backtest as specified. Oh well, live and learn.

Hypothesis 3: Post-Crash Returns Are No Different than Pre-Crash Returns

As you probably remember, there was a bit of a bubble in technology startups that popped at the beginning of 2001. You might think this bubble would make angel investments from 2001 on worse. However, my guess was that returns wouldn’t break that cleanly. Sure, many 1998 and some 1999 investments might have done very well. But other 1999 and most 2000 investments probably got caught in the crash. Conversely, if you invested in 2001 and 2002 when everybody else was hunkered down, you could have picked up some real bargains.

The Excel file with 168 records of investments from 2001 and later is here. 23% IRR and 1.7x payout multiple. Ouch! Was I finally wrong? Maybe. Maybe not. The first problem is that there are only 168 records. The sample may be too small. But I think the real issue is that the dataset “cut off” many of the successful post-bubble investments because it ends in 2007.

To test this explanation, I examined the original AIPP data file. I filtered it to include only investment records that had an investment date and where time didn’t run backwards. That file is here. It contains 304 records of investments before 2001 and 344 records of investments in 2001 or later. My sample of exited investments contains 284 records from before 2001 and 168 records from 2001 or later. So 93% of the earlier investments have corresponding exit records and 49% of the later ones do. Note that the AIPP data includes bankruptcies as exits.
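The coverage percentages are just the ratio of exited records to dated investment records in each period. A quick Python sketch using the counts quoted above (the variable and function names are illustrative, not part of the AIPP files):

```python
# Record counts as quoted in the text.
dated_investments = {"before 2001": 304, "2001 or later": 344}
exited_investments = {"before 2001": 284, "2001 or later": 168}


def exit_coverage(exited: int, invested: int) -> float:
    """Share of dated investment records that have a matching exit record."""
    return exited / invested


coverage = {
    period: exit_coverage(exited_investments[period], dated_investments[period])
    for period in dated_investments
}
for period, share in coverage.items():
    print(f"{period}: {share:.0%} of investments have exit records")
```

The gap between the two shares is the point: roughly half of the later investments had not yet exited when the data set ended.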

So I think we have an explanation. About half of the later investments hadn’t run their course yet. Because successes take longer than failures, this sample over-represents failures. I wish I had thought of that before I ran the test! But it would be disingenuous not to publish the results now.

Conclusion

So I think we’ve answered some interesting questions about angel investing. More important, the process demonstrates why we need to collect much more data in this area. According to the Center for Venture Research, there are about 50K angel investments per year in the US. The AIPP data set has under 500 exited investments covering a decades-long span. We could do much more hypothesis testing, with several iterations of refinements, if we had a larger sample.

Startups, Employment, and Growth

With regard to my posts on how startups help drive both employment and growth, a couple of people have pointed me to this essay in BusinessWeek by Andy Grove. He says that:

- We have a “…misplaced faith in the power of startups to create U.S. jobs.”

- “The scaling process is no longer happening in the U.S. And as long as that’s the case, plowing capital into young companies that build their factories elsewhere will continue to yield a bad return in terms of American jobs.”

- “Scaling isn’t easy. The investments required are much higher than in the invention phase.”

His basic argument is more sophisticated than the typical “America no longer makes things” rhetoric. It boils down to network effects. If the US doesn’t manufacture technology-intensive products, we incur two penalties because we lack the corresponding network of skills: (a) in technology areas that exist today, we will not be able to innovate as effectively in the future and (b) we will lack the knowledge it takes to scale tomorrow’s technology areas altogether. For all the usual economic reasons, I don’t believe this argument, but let’s assume it’s true. Would the US be in trouble?

Grove makes a lot of anecdotal observations and examines some manufacturing employment numbers. However, I think it’s a mistake to generalize too much from any individual case or particular metric. The economy is diverse enough to provide examples of almost any condition and we expect a priori to find specialization within sectors. We should examine a variety of metrics to get the full picture of whether America is losing its ability to “scale up”. Bear with me: Grove’s essay is four pages long, so it will take me a while to fully address it.

Manufacturing Jobs

First, let’s look at the assertion that we aren’t creating jobs relevant to scaling up technologies. The real question is: what kinds of jobs build these skills? Does any old manufacturing job help? I don’t think suicide-inducing, Foxconn-style manufacturing jobs are what we really want. Maybe what we want is “good” or “high-skill” manufacturing jobs? Well, take a look at this graph from Carpe Diem of real manufacturing output per worker:

That’s right, the average manufacturing worker in the US produces almost $280K worth of stuff per year. More than 3x what his father or her mother produced 40 years ago. That should certainly support high quality jobs. But is the quality of manufacturing jobs actually increasing? Just check out these graphs of manufacturing employment by level of education from the Heritage Foundation:

This suggests to me that we’re replacing a bunch of low-skilled workers with fewer high-skilled workers. But that’s good. It means we’re creating jobs that require more knowledge (aka “human capital” that we can leverage). Look at the 44% increase in manufacturing workers with advanced degrees! Contrary to Grove, it seems like we’re accumulating a lot of high-powered know-how about how to scale up.

Manufacturing Capability

Now you might be thinking that we could still be losing ground if the productivity of our high-end manufacturing jobs isn’t enough to make up for job losses on the low-end. In Grove’s terms, our critical mass of scaling-up ability might be eroding. Not the case. Just consider the statistics on industrial production from the Federal Reserve. This table shows that overall industrial production in the US has increased 68% in real terms over the 25 years from 1986 to 2010, which is 2.1% per year.

“Aha!” you say, “But Grove is talking about the recent history of technology-related products.” Then how about semiconductors and related equipment from 2001-2010? That’s the sector Grove came from. There, we have a 334% increase in output, or 16% per year. Conveniently, the Fed also has a category covering all of hi-tech manufacturing output (HITEK2): 163% increase over the same period, or 10% per year. Now, the US economy grew only 1.5% per year from 2001 to 2010 (actually 4Q00 to 3Q10 because the 4Q10 GDP numbers aren’t out). So in the last ten years, our ability to manufacture high-technology products increased at almost 7x the rate of our overall economy. We’re actually getting much better at scaling up new technologies!
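The per-year figures are compound annual growth rates. As a rough check, this Python sketch backs them out of the cumulative increases, assuming the spans count as 25 years (1986 to 2010) and 10 years (2001 to 2010), as the text treats them. The function and variable names are illustrative.

```python
def annualized_growth(total_increase_pct: float, years: int) -> float:
    """Compound annual growth rate (percent) implied by a cumulative percent increase."""
    growth_factor = 1.0 + total_increase_pct / 100.0
    return (growth_factor ** (1.0 / years) - 1.0) * 100.0


# Cumulative increases and spans as quoted in the text.
series = {
    "All industrial production, 1986-2010": (68.0, 25),
    "Semiconductors and related equipment, 2001-2010": (334.0, 10),
    "Hi-tech manufacturing (HITEK2), 2001-2010": (163.0, 10),
}
rates = {name: annualized_growth(pct, yrs) for name, (pct, yrs) in series.items()}
for name, rate in rates.items():
    print(f"{name}: {rate:.1f}% per year")

gdp_growth_pct = 1.5  # annual GDP growth quoted in the text
hitech_vs_gdp = rates["Hi-tech manufacturing (HITEK2), 2001-2010"] / gdp_growth_pct
print(f"Hi-tech output grew about {hitech_vs_gdp:.1f}x as fast as GDP")
```

Compounding matters here: simply dividing 334% by 10 would suggest 33% per year, about double the true annualized rate.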

I can think of one other potential objection: lack of investment in future production. There is a remote possibility that, despite the terrific productivity and output growth in US high-tech manufacturing today, we won’t be able to maintain this strength in the future. I think the best measure of expected future capability is foreign direct investment (FDI). These are dollars that companies in one country invest directly in business ventures of another country. They do NOT include investments in financial instruments. Because these dollars are coming from outside the country, they represent an objective assessment of which countries offer good opportunities. So let’s compare net inflows of FDI for China and the US using World Bank data from 2009. For China, we have $78B. For the US, we have $135B. This isn’t terribly surprising given the relative sizes of the economies, but there certainly doesn’t seem to be any market wisdom that the US is going to lose lots of important capabilities in the future.

Will China outcompete the US in some hi-tech industries? Absolutely. But that’s just what we expect from the theory of comparative advantage. They will specialize in the areas where they have advantages and we will specialize in other areas where we have advantages. Both economies will benefit from this specialization. An economist would be very surprised indeed if Grove couldn’t point to certain industries where China is “winning”. However, the data clearly shows that China is not poised to dominate hi-tech manufacturing across the board.

Startup Job Creation

So we’ve addressed Grove’s concerns about the US losing its ability to “scale up”. Let’s move on to the issue of startups. Remember, he said that startups, “…will continue to yield a bad return in terms of American jobs.” As I posted before, startups create a net of 3M jobs per year. Without startups, job growth would be negative. If Grove cares about jobs, he should care about startups. The data is clear.

The one plausible argument I’ve seen against this compelling data is that most of these jobs evaporate. It is true that many startups fail. The question is, what happens on average? Well, the Kauffman Foundation has recently done a study on that too, using Census Bureau Business Dynamic Statistics data. They make a key point about what happens as a cohort of startups matures:

The upper line represents the number of jobs on average at all startups, relative to their year of birth. The way to interpret the graph is that a lot of startups fail, but the ones that succeed grow enough to support about 2/3 of the initial job creation over the long term; 2/3 appears to be the asymptote of the top line. The number of firms continues declining, but job growth at survivors makes up the difference starting after about 15 years. For example, a bunch of startups founded in the late 90s imploded. But Google keeps growing and hiring. Same as in the mid 00s for Facebook. Bottom line: of the 3M jobs created by startups each year, about 2M of them are “permanent” in some sense. The other 1M get shifted to startups in later years. So startups are in fact a reliable source of employment.

I’d like to make one last point, not about employment per se, but about capturing the economic gains from startups. If we generalize Grove’s point, we might be worried that the US develops innovations, but other countries capture the economic gains. To dispel this concern, we need only refer back to my post on the economic gains attributable to startups, using data across states in the US. Recall that this study looked at differing rates of startup formation in states to conclude that a 5% increase in new firm births increases the GDP growth rate by 0.5 percentage points.

I would argue that it’s much more likely that a state next door could “siphon off” innovation gains from its neighbor than a distant country could siphon off innovation gains from the US: (a) the logistics make transactions more convenient, (b) there are no trade barriers between states, and (c) workers in New Mexico are a much closer substitute for workers in Texas than workers in China. But the study clearly shows that states are getting a good economic return from startups formed within their boundaries. Now, I’m certain there are positive “spillover” effects to neighboring states. But the states where the startups are located get a tremendous benefit even with the ease of trade among states.

Conclusion

I think it’s pretty clear that, even if you accept Grove’s logic, there’s no sign that the US is losing its ability to scale up. However, I would be remiss if I didn’t point out my disagreement with the logic. I’ve seen no evidence of a need to be near manufacturing to be able to innovate. In fact, every day I see evidence against it.

I live in Palo Alto. As far as I know, we don’t actually manufacture any technology products in significant quantities anymore. Yet lots of people who live and work here make a great living focusing on technology innovation. As Don Boudreaux is fond of pointing out on his blog and in letters to the mainstream media, there is no difference between the trade between Palo Alto and San Jose and the trade between Palo Alto and Shanghai. In fact, I know lots of people in the technology industry who work on innovations here in the Bay Area and then fly to Singapore, Taipei, or Shanghai to work with people at the factories cranking out units.

Certainly, I acknowledge that a government can affect the ability of its citizens to compete in the global economy. But the best way to support its citizens is to reduce the barriers to creating new businesses and then enable those businesses to access markets, whether those markets are down the freeway or across the world. One of the worst things a government could do is fight a trade war, which is what Grove advocates in the third-to-last paragraph of his essay.

The ingenuity of American engineers and entrepreneurs is doing just fine, as my data shows. We don’t need an industrial policy.