Archive for January 2011

Production Function Space and Government Inefficiency

This weekend, I was at a party chatting with two of my friends who have PhDs in Education. They were explaining the sources of the inefficiency they see in the public school system. That’s when I had yet another Production Function Space (PFS) epiphany.

Recall my previous discussion about how I think large companies devote much of their energy to searching PFS rather than implementing specific production functions. However, in listening to how public education works, the contrast struck me.

Only a tiny sliver of effort goes to searching PFS in public education. Instead, the vast majority of overhead consists of two tasks. First, because there’s no market discipline, government organizations use a rigid system of rules in an effort to prevent waste. So they have a bunch of people and processes dedicated to enforcing these rules: the bureaucracy. Of course, in an ever-changing landscape, these rules aren’t anywhere close to optimal for long. But hey, they are the rules. So a big chunk of effort that would go towards innovation in a commercial organization actually goes toward preventing innovation in a government organization. Because there’s no systematic way to tell waste from innovation without a market.

Without market signals, there’s also no obvious way for employees to improve their position by improving organizational performance. But don’t count out human ingenuity. That’s the second substitute for searching PFS: improving job security and job satisfaction. So you see workers seeking credentialing, tenure, guaranteed benefit plans and limitations on the number of hours spent in staff meetings.

From what I’ve read, this observation generalizes to other government agencies. If searching PFS is a major source of continuous improvement in commercial organizations, its conspicuous absence in government organizations means they are even less efficient than we thought. We don’t just lose out on the discipline of market signals today. We lose out on the inspiration they provide for improvement tomorrow.

More Angel Investing Returns

According to our Web statistics, my post on Angel Investing Returns was pretty popular, so I thought I’d dive a little deeper into the process of extracting information from this data set. At the end of the last post, I hinted that there might be some value in, “…analyzing subsets of the AIPP data…” Why would you want to do this? To test hypotheses about angel investing.

Now, you must be careful here. You should always construct your hypotheses before looking at the data. Otherwise, it’s hard to know if this particular data is confirming your hypothesis or if you molded your hypothesis to fit this particular data. You already have the challenge of assuming that past results will predict future results. Don’t add to this burden by opening yourself to charges of “data mining”.

I can go ahead and play with this data all I want. I already used it to “backtest” RSCM’s investment strategy. We developed it by reading research papers, analyzing other data sources, and running investment simulations. When we found the AIPP download page, it was like Christmas: a chance to test our model against new data. So I already took my shot. But if you’re thinking about using the AIPP data in a serious way, you might want to stop reading unless you’ve written your hypotheses down already. As they say, “Spoiler alert.”

But if you’re just curious, you might find my three example hypothesis tests interesting. They’re all based loosely on questions that arose while doing research for RSCM.

Hypothesis 1: Follow On Investments Don’t Improve Returns

It’s an article of faith in the angel and VC community that you should “double down on your winners” by making follow on investments in companies that are doing well. However, basic portfolio and game theory made me skeptical. If early stage companies are riskier, they should have higher returns. Investing in later stages just mixes higher returns with lower returns, reducing the average. Now, some people think they have inside information that allows them to make better follow-on decisions and outperform the later stage average. Of course, other investors know this too. So if you follow on in some companies but not others, they will take it as a signal that the others are losers. I don’t think an active angel investor could sustain much of an advantage for long.

But let’s see what the AIPP data says. I took the Excel file from my last post and simply blanked out all the records with any follow on investment entries. The resulting file with 330 records is here. The IRR was 62%, the payout multiple was 3.2x, and the hold time was 3.4 years. That’s a huge edge over 30% and 2.4x!

Now, let’s not get too excited here. There’s a difference between deals where there was no follow on and deals where an investor was using a no-follow-on strategy. We don’t know why an AIPP deal didn’t have any follow on. It could be that the company was so successful it didn’t need more money. Of course, the fact that this screen still yields 330 out of 452 records argues somewhat against a very specific sample bias, but there could easily be more subtle issues.

Given the magnitude of the difference, I do think we can safely say that the conventional wisdom doesn’t hold up. You don’t need to do follow on. However, without data on investor strategies, there’s still some room for interpretation on whether a no-follow-on strategy actually improves returns.

Hypothesis 2: Small Investments Have Better Returns than Large Ones

Another common VC mantra is that you should “put a lot of money to work” in each investment. To me, this strategy seems more like a way to reduce transaction costs than improve outcomes, which is fine, but the distinction is important. Smaller investments probably occur earlier so they should be higher risk and thus higher return. Also, if everyone is trying to get into the larger deals, smaller investments may be less competitive and thus offer greater returns.

I chose $300K as the dividing line between small and large investments, primarily because that was our original forecast of average investment for RSCM (BTW, we have revised this estimate downward based on recent trends in startup costs and valuations). The Excel file with 399 records of “small” investments is here. The IRR was 39% and the payout multiple was 4.0x. Again, a huge edge over the entire sample! Interestingly, less of an edge in IRR but more of an edge in multiple than the no-follow-on test. But smaller investments may take longer to pay out if they are also earlier. IRR really penalizes hold time.

Interesting side note. When I backtested the RSCM strategy, I keyed on investment “stage” as the indicator of risky early investments. Seeing as how this was the stated definition of “stage”, I thought I was safe. Unfortunately, it turned out that almost 60% of the records had no entry for “stage”. Also, many of the records that did have entries were strange. A set of 2002 “seed” investments in one software company for over $2.5M? A 2003 “late growth” investment in a software company of only $50K? My guess is that the definition wasn’t clear enough to investors filling out the survey. But I had committed to my hypothesis already and went ahead with the backtest as specified. Oh well, live and learn.

Hypothesis 3: Post-Crash Returns Are No Different than Pre-Crash Returns

As you probably remember, there was a bit of a bubble in technology startups that popped at the beginning of 2001. You might think this bubble would make angel investments from 2001 on worse. However, my guess was that returns wouldn’t break that cleanly. Sure, many 1998 and some 1999 investments might have done very well. But other 1999 and most 2000 investments probably got caught in the crash. Conversely, if you invested in 2001 and 2002 when everybody else was hunkered down, you could have picked up some real bargains.

The Excel file with 168 records of investments from 2001 and later is here. 23% IRR and 1.7x payout multiple. Ouch! Was I finally wrong? Maybe. Maybe not. The first problem is that there are only 168 records. The sample may be too small. But I think the real issue is that the dataset “cut off” many of the successful post-bubble investments because it ends in 2007.

To test this explanation, I examined the original AIPP data file. I filtered it to include only investment records that had an investment date and where time didn’t run backwards. That file is here. It contains 304 records of investments before 2001 and 344 records of investments in 2001 or later. My sample of exited investments contains 284 records from before 2001 and 168 records from 2001 or later. So 93% of the earlier investments have corresponding exit records and 49% of the later ones do. Note that the AIPP data includes bankruptcies as exits.

So I think we have an explanation. About half of the later investments hadn’t run their course yet. Because successes take longer than failures, this sample over-represents failures. I wish I had thought of that before I ran the test! But it would be disingenuous not to publish the results now.

Conclusion

So I think we’ve answered some interesting questions about angel investing. More important, the process demonstrates why we need to collect much more data in this area. According to the Center for Venture Research, there are about 50K angel investments per year in the US. The AIPP data set has under 500 exited investments covering a decades-long span. We could do much more hypothesis testing, with several iterations of refinements, if we had a larger sample.

Startups, Employment, and Growth

With regard to my posts on how startups help drive both employment and growth, a couple of people have pointed me to this essay in BusinessWeek by Andy Grove. He says that:

- We have a, “…misplaced faith in the power of startups to create U.S. jobs.”

- “The scaling process is no longer happening in the U.S. And as long as that’s the case, plowing capital into young companies that build their factories elsewhere will continue to yield a bad return in terms of American jobs.”

- “Scaling isn’t easy. The investments required are much higher than in the invention phase.”

His basic argument is more sophisticated than the typical “America no longer makes things” rhetoric. It boils down to network effect. If the US doesn’t manufacture technology-intensive products, we incur two penalties because we lack the corresponding network of skills: (a) in technology areas that exist today, we will not be able to innovate as effectively in the future and (b) we will lack the knowledge it takes to scale tomorrow’s technology areas altogether. I don’t believe this argument for all the usual economic reasons, but let’s assume it’s true. Would the US be in trouble?

Grove makes a lot of anecdotal observations and examines some manufacturing employment numbers. However, I think it’s a mistake to generalize too much from any individual case or particular metric. The economy is diverse enough to provide examples of almost any condition and we expect a priori to find specialization within sectors. We should examine a variety of metrics to get the full picture of whether America is losing its ability to “scale up”. Bear with me, Grove’s essay is four pages long so it will take me a while to fully address it.

Manufacturing Jobs

First, let’s look at the assertion that we aren’t creating jobs relevant to scaling up technologies. The real question is: what kinds of jobs build these skills? Does any old manufacturing job help? I don’t think Foxconn-style, suicide-inducing manufacturing jobs are what we really want. Maybe what we want is “good” or “high-skill” manufacturing jobs? Well, take a look at this graph from Carpe Diem of real manufacturing output per worker:

That’s right, the average manufacturing worker in the US produces almost $280K worth of stuff per year. More than 3x what his father or her mother produced 40 years ago. That should certainly support high quality jobs. But is the quality of manufacturing jobs actually increasing? Just check out these graphs of manufacturing employment by level of education from the Heritage Foundation:

This suggests to me that we’re replacing a bunch of low-skilled workers with fewer high-skilled workers. But that’s good. It means we’re creating jobs that require more knowledge (aka “human capital” that we can leverage). Look at the 44% increase in manufacturing workers with advanced degrees! Contrary to Grove, it seems like we’re accumulating a lot of high-powered know-how about how to scale up.

Manufacturing Capability

Now you might be thinking that we could still be losing ground if the productivity of our high-end manufacturing jobs isn’t enough to make up for job losses on the low-end. In Grove’s terms, our critical mass of scaling-up ability might be eroding. Not the case. Just consider the statistics on industrial production from the Federal Reserve. This table shows that overall industrial production in the US has increased 68% in real terms over the 25 years from 1986 to 2010, which is 2.1% per year.

“Aha!” you say, “But Grove is talking about the recent history of technology-related products.” Then how about semiconductors and related equipment from 2001-2010? That’s the sector Grove came from. There, we have a 334% increase in output, or 16% per year. Conveniently, the Fed also has a category covering all of hi-tech manufacturing output (HITEK2): 163% increase over the same period, or 10% per year. Now the US economy only grew at 1.5% per year from 2001 to 2010 (actually 4Q00 to 3Q10 because the 4Q10 GDP numbers aren’t out). So in the last ten years, our ability to manufacture high-technology products increased at almost 7x the rate of our overall economy. We’re actually getting much better at scaling up new technologies!
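The per-year figures above follow from the standard compound growth formula. Here is a minimal sketch in Python; the function name is my own, and the period lengths (25 and 10 years) are assumptions read off the date ranges quoted in the text.

```python
def annual_growth_rate(total_increase_pct: float, years: float) -> float:
    """Compound annual growth rate (in percent) implied by a total percentage increase."""
    growth_factor = 1.0 + total_increase_pct / 100.0
    return (growth_factor ** (1.0 / years) - 1.0) * 100.0


# Total increases quoted in the text; the year counts are assumed period lengths.
series = [
    ("overall industrial production", 68, 25),
    ("semiconductors and related equipment", 334, 10),
    ("hi-tech manufacturing (HITEK2)", 163, 10),
]

for label, increase_pct, years in series:
    rate = annual_growth_rate(increase_pct, years)
    print(f"{label}: {rate:.1f}% per year")
```

Under those assumptions, the three series come out close to the 2.1%, 16%, and 10% per year quoted above.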

I can think of one other potential objection. Lack of investment in future production. There is a remote possibility that, despite the terrific productivity and output growth in US high-tech manufacturing today, we won’t be able to maintain this strength in the future. I think the best measure of expected future capability is foreign direct investment (FDI). These are dollars that companies in one country invest directly in business ventures of another country. They do NOT include investments in financial instruments. Because these dollars are coming from outside the country, they represent an objective assessment of which countries offer good opportunities. So let’s compare net inflows of FDI for China and the US using World Bank data from 2009. For China, we have $78B. For the US, we have $135B. This isn’t terribly surprising given the relative sizes of the economies, but there certainly doesn’t seem to be any market wisdom that the US is going to lose lots of important capabilities in the future.

Will China outcompete the US in some hi-tech industries? Absolutely. But that’s just what we expect from the theory of comparative advantage. They will specialize in the areas where they have advantages and we will specialize in other areas where we have advantages. Both economies will benefit from this specialization. An economist would be very surprised indeed if Grove couldn’t point to certain industries where China is “winning”. However, the data clearly shows that China is not poised to dominate hi-tech manufacturing across the board.

Startup Job Creation

So we’ve addressed Grove’s concerns about the US losing its ability to “scale up”. Let’s move on to the issue of startups. Remember, he said that startups, “…will continue to yield a bad return in terms of American jobs.” As I posted before, startups create a net of 3M jobs per year. Without startups, job growth would be negative. If Grove cares about jobs, he should care about startups. The data is clear.

The one plausible argument I’ve seen against this compelling data is that most of these jobs evaporate. It is true that many startups fail. The question is, what happens on average? Well, the Kauffman Foundation has recently done a study on that too, using Census Bureau Business Dynamic Statistics data. They make a key point about what happens as a cohort of startups matures:

The upper line represents the number of jobs on average at all startups, relative to their year of birth. The way to interpret the graph is that a lot of startups fail, but the ones that succeed grow enough to support about 2/3 of the initial job creation over the long term; 2/3 appears to be the asymptote of the top line. The number of firms continues declining, but job growth at survivors makes up the difference starting after about 15 years. For example, a bunch of startups founded in the late 90s imploded. But Google keeps growing and hiring. Same as in the mid 00s for Facebook. Bottom line: of the 3M jobs created by startups each year, about 2M of them are “permanent” in some sense. The other 1M get shifted to startups in later years. So startups are in fact a reliable source of employment.

I’d like to make one last point, not about employment per se, but about capturing the economic gains from startups. If we generalize Grove’s point, we might be worried that the US develops innovations, but other countries capture the economic gains. To dispel this concern, we need only refer back to my post on the economic gains attributable to startups, using data across states in the US. Recall that this study looked at differing rates of startup formation in states to conclude that a 5% increase in new firm births increases the GDP growth rate by 0.5 percentage points.

I would argue that it’s much more likely that a state next door could “siphon off” innovation gains from its neighbor than a distant country could siphon off innovation gains from the US: (a) the logistics make transactions more convenient, (b) there are no trade barriers between states, and (c) workers in New Mexico are a much closer substitute for workers in Texas than workers in China. But the study clearly shows that states are getting a good economic return from startups formed within their boundaries. Now, I’m certain there are positive “spillover” effects to neighboring states. But the states where the startups are located get a tremendous benefit even with the ease of trade among states.

Conclusion

I think it’s pretty clear that, even if you accept Grove’s logic, there’s no sign that the US is losing its ability to scale up. However, I would be remiss if I didn’t point out my disagreement with the logic. I’ve seen no evidence of a need to be near manufacturing to be able to innovate. In fact, every day I see evidence against it.

I live in Palo Alto. As far as I know, we don’t actually manufacture any technology products in significant quantities any more. Yet lots of people who live and work here make a great living focusing on technology innovation. As Don Boudreaux is fond of pointing out on his blog and in letters to the mainstream media, there is no difference in the trade between Palo Alto and San Jose and the trade between Palo Alto and Shanghai. In fact, I know lots of people in the technology industry who work on innovations here in the Bay Area and then fly to Singapore, Taipei, or Shanghai to work with people at the factories cranking out units.

Certainly, I acknowledge that a government can affect the ability of its citizens to compete in the global economy. But the best way to support its citizens is to reduce the barriers to creating new businesses and then enable those businesses to access markets, whether those markets are down the freeway or across the world. One of the worst things a government could do is fight a trade war, which is what Grove advocates in the third-to-last paragraph of his essay.

The ingenuity of American engineers and entrepreneurs is doing just fine, as my data shows. We don’t need an industrial policy.

Angel Investing Returns

In my work for RSCM, one of the key questions is, “What is the return of angel investing?” There’s some general survey data and a couple of angel groups publish their returns, but the only fine-grained public dataset I’ve seen comes from Rob Wiltbank of Willamette University and the Kauffman Foundation’s Angel Investor Performance Project (AIPP).

In this paper, Wiltbank and Boeker calculate the internal rate of return (IRR) of AIPP investments as 27%, using the average payoff of 2.6x and the average hold time of 3.5 years. Now, the arithmetic is clearly wrong: 1.27^3.5 = 2.3, not 2.6. The correctly calculated IRR using this methodology is 31%. DeGenarro et al. report (page 10) that this discrepancy is due to the fact that Wiltbank and Boeker did not weight investments appropriately.
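To make the arithmetic check concrete, here is a small Python sketch of the average-multiple shortcut, under the assumption that it treats the whole portfolio as a single outflow followed by a single inflow after the average hold time. The function names are mine, not from the paper.

```python
def multiple_from_irr(irr: float, hold_years: float) -> float:
    """Payoff multiple implied by compounding at `irr` for `hold_years`."""
    return (1.0 + irr) ** hold_years


def irr_from_multiple(multiple: float, hold_years: float) -> float:
    """Annualized return implied by a payoff multiple over `hold_years`."""
    return multiple ** (1.0 / hold_years) - 1.0


# A 27% IRR over 3.5 years implies a multiple of about 2.3x, not 2.6x.
print(f"{multiple_from_irr(0.27, 3.5):.2f}x")

# A 2.6x multiple over 3.5 years implies an IRR of about 31%.
print(f"{irr_from_multiple(2.6, 3.5):.1%}")
```

The two functions are inverses of each other, which is a quick way to check any quoted combination of IRR, multiple, and hold time for consistency.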

In any case, the entire methodology of using average payoffs and hold times is somewhat iffy. When I read the paper, I immediately had flashbacks to my first engineering-economics class at Stanford. There was a mind-numbing problem set that beat into our skulls the fact that IRR calculations are extremely sensitive to the timing of cash outflows and inflows. I eventually got a Master’s degree in that department, so loyally adopted IRR sensitivity as a pet peeve.

To calculate the IRR for the AIPP dataset, what we really want is to account for the year of every outflow and inflow. The first step is to get a clean dataset. I started by downloading the public AIPP data. I then followed a three step cleansing process:

- Select only those records that correspond to an exited investment.

- Delete all records that do not have both dates and amounts for the investment and the exit.

- Delete all records where time runs backwards (e.g., payout before investment).
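The three cleansing steps above can be sketched as a simple filter in Python. This is an illustration only: the field names (`exited`, `invest_year`, `invest_amount`, `exit_year`, `exit_amount`) are hypothetical and do not correspond to the actual column names in the AIPP file.

```python
# Hypothetical field names; the real AIPP columns are named differently.
REQUIRED_FIELDS = ("invest_year", "invest_amount", "exit_year", "exit_amount")


def clean(records):
    """Apply the three cleansing steps to a list of dict records."""
    kept = []
    for rec in records:
        # Step 1: keep only records that correspond to an exited investment.
        if not rec.get("exited"):
            continue
        # Step 2: require both dates and amounts for the investment and the exit.
        # An exit amount of 0 (e.g. a bankruptcy) is valid data, so test for None.
        if any(rec.get(field) is None for field in REQUIRED_FIELDS):
            continue
        # Step 3: drop records where time runs backwards.
        if rec["exit_year"] < rec["invest_year"]:
            continue
        kept.append(rec)
    return kept


sample = [
    {"exited": True, "invest_year": 1999, "invest_amount": 50, "exit_year": 2003, "exit_amount": 0},
    {"exited": False, "invest_year": 2004, "invest_amount": 25, "exit_year": None, "exit_amount": None},
    {"exited": True, "invest_year": 2002, "invest_amount": 40, "exit_year": 2001, "exit_amount": 80},
    {"exited": True, "invest_year": 2000, "invest_amount": None, "exit_year": 2005, "exit_amount": 90},
]
print(len(clean(sample)))
```

Only the first sample record survives all three steps; note that it is a total loss, which still counts as an exit.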

The result was 452 records. A good-sized sample. The next step was to normalize all investments so they started in the year 2000. While not strictly necessary, it greatly simplified the mechanics of collating outflows and inflows by year. Finally, I had to interpolate dates in two types of cases:

- While the dataset includes the years of the first and second follow on investment, it does not include the year for the “followxinvest”. For the affected 12 records, I interpolated by calculating the halfway point between the previous investment and the exit, rounding down. Note that this is a conservative assumption. Rounding down pushes the outflow associated with the investment earlier, which lowers the IRR.

- For 78 records, there are “midcash” entries where investors received some payout before the final exit. Unfortunately, there is no year associated with this payout. A conservative assumption pushes inflows later, so I assumed that the intermediate payout occurred either 0, 1, or 2 years before the final exit. I calculated the midpoint between the last investment and the final exit and rounded down. If it was more than 2 years before the final exit, I used 2 years.
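The two interpolation rules can be written down as short Python functions. This is my reading of the rules rather than the spreadsheet logic itself: in particular, I assume the intermediate payout is placed half the distance (rounded down) between the last investment and the exit, counted back from the exit and capped at 2 years. The function names are hypothetical.

```python
def followx_year(previous_invest_year: int, exit_year: int) -> int:
    """Year assumed for an undated follow-on investment.

    Halfway between the previous investment and the exit, rounded down,
    which pushes the outflow earlier.
    """
    return previous_invest_year + (exit_year - previous_invest_year) // 2


def midcash_year(last_invest_year: int, exit_year: int) -> int:
    """Year assumed for an undated intermediate payout (assumed interpretation).

    The payout lands 0, 1, or 2 years before the final exit, which pushes
    the inflow later.
    """
    years_before_exit = min((exit_year - last_invest_year) // 2, 2)
    return exit_year - years_before_exit


print(followx_year(2000, 2005))   # follow-on placed in 2002
print(midcash_year(2000, 2003))   # payout placed 1 year before exit
print(midcash_year(2000, 2010))   # capped at 2 years before exit
```

Both rules err on the conservative side: earlier outflows and later inflows each lower the resulting IRR.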

With these steps completed, I simply added up outflows and inflows for every year and used the Excel IRR calculation.
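For readers who want to see what an IRR calculation on yearly net cash flows involves, here is a minimal Python sketch. It finds the rate at which net present value is zero using simple bisection, which is my choice for clarity and not necessarily the method Excel uses. The cash flows are made-up numbers, not AIPP data.

```python
def npv(rate: float, cash_flows: list) -> float:
    """Net present value of yearly cash flows, with year 0 first."""
    return sum(cf / (1.0 + rate) ** year for year, cf in enumerate(cash_flows))


def irr(cash_flows: list, low: float = -0.99, high: float = 10.0, tol: float = 1e-9) -> float:
    """Rate where NPV crosses zero, found by bisection.

    Assumes NPV changes sign exactly once between `low` and `high`.
    """
    f_low = npv(low, cash_flows)
    if f_low * npv(high, cash_flows) > 0:
        raise ValueError("NPV does not change sign in the given bracket")
    while high - low > tol:
        mid = (low + high) / 2.0
        f_mid = npv(mid, cash_flows)
        if f_low * f_mid <= 0:
            high = mid
        else:
            low, f_low = mid, f_mid
    return (low + high) / 2.0


# Same outflow and same 2.4x payoff, but the inflow arrives in different years.
late_payout = [-100, 0, 0, 240]
early_payout = [-100, 0, 240, 0]
print(f"{irr(late_payout):.1%}")
print(f"{irr(early_payout):.1%}")
```

The two made-up examples have the same payoff multiple, yet moving the inflow one year earlier raises the IRR substantially. That is the timing sensitivity that makes averages of payoffs and hold times an unreliable shortcut.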

The result was an IRR of 30% and a payoff multiple of 2.4x with an average hold time of 3.6 years.

Please note that this multiple is slightly lower than the 2.6x and the hold time is slightly higher than the 3.5 years Wiltbank and Boeker calculated for the entire dataset. Thus, my results do not depend on accidentally cherry-picking high-returning, quick-payout investments. If you want to double-check my work, you can download the Excel file here.

All in all, a satisfying result. Not too different from what other people have published, but I feel much more confident in the number. For anyone analyzing subsets of the AIPP data, I’ve found that my Excel file makes it pretty easy to calculate those returns. Just zero out all records you don’t care about by selecting the row and hitting the “Delete” key. The return results will update correctly. But don’t do a “Delete Row”. Then a bunch of the cell references will be broken. [Update 1/27/11: I’ve done a follow up post on using this method to test various hypotheses.]

Startups, Small Businesses, and Large Companies

One of the reasons I like my Production Function Space (PFS) hypothesis is that it clarifies a lot of issues that have puzzled me for a while. For example, as part of my work on seed-stage startup investing with RSCM, I have struggled with two questions: (1) what’s the difference between a startup and a small business and (2) why do some large companies have initiatives like venture groups and startup incubators?

To answer these questions, I’m going to have to get a little mathematical. Don’t worry. No derivatives or integrals, but I need to introduce some notation to keep the story straight. First, let’s define production footprint and search area.

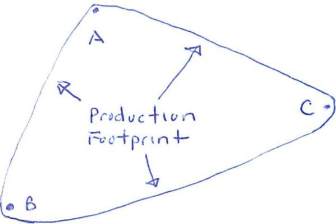

A production footprint is the surface that encloses a set of points in PFS.* If A is a production function for basketballs, B is a production function for baseballs, and C is a production function for golfballs, then pf(ABC) looks like this in two dimensions:

We can also talk about the production footprint of a company like Google. pf(Google) is the surface that encloses all the production functions that Google currently uses.

A search area is the surface defined by extending the production footprint outward by the search radius.** If we wanted to see whether making basketballs, baseballs, and golfballs would enable a company to make footballs using production function F, we might want to compute sa(r, ABC). It looks like this in two dimensions:

We can also talk about search areas for a company. sa(r,Google) is the surface enclosing all the points in PFS within r units of Google’s current production footprint. If we write just sa(Google), we mean search area using Google’s actual search radius.

With these two basic concepts in place, I can now easily answer questions (1) and (2). SU stands for startup, SB stands for small business, and LC stands for large company. So what’s the difference between a startup and a small business? Well, when they are founded, a startup doesn’t have any production footprint at all and a small business does. To a first order, pf(SU)=0 and pf(SB)>0. A startup doesn’t know with any precision how it’s going to make stuff. A small business does. Whether it’s a dry cleaner, law office, or liquor distributor, the founders know pretty precisely what they’re going to do and how they’re going to do it. However, startups have a much larger search radius than small businesses. r(SU)>r(SB). Assuming that we can define a search area on a set of production functions the startup could currently implement (but hasn’t yet), I contend that sa(SU)>sa(SB) as well.

This realization was an epiphany for me. Even though the average person thinks of startups and small businesses as similar, they are actually polar opposites. They may both have a few employees working in a small office, but one is widely focused on exploring a huge region of PFS while the other is narrowly focused on implementing production functions within a tiny region of PFS. I also realized that you need to evaluate two things in a startup: (a) its ability to search PFS and (b) the ability to implement a production function once it locates a promising region of PFS. But the magnitude of impact for (a) is at least as big as (b) in the very early stages.

Now on to the issue of large companies. The problem here is search costs. Remember that, in three-dimensional space, volume increases as the cube of distance. In PFS, volume increases as distance raised to a power equal to the dimensionality of PFS. I posit that PFS is high-dimension, so this volume increases very quickly indeed. Now try to visualize the production footprint and search area of a large company. A large company has a lot of production functions in play so pf(LC) is large. But as r grows, sa(r,LC) expands from this already large volume at a rate governed by that large exponent.

In three dimensions, imagine that pf(LC) is like a hot air balloon. Extending just 10 feet out from the hot air balloon’s surface encompasses a huge volume of additional air. But in high-dimension PFS, the effect is… well… exponentially greater. So a large company has a problem. On the one hand, increasing its search radius is enormously costly. On the other hand, we know that Black Swan shifts in the fabric of PFS will occasionally render a huge volume dramatically less profitable, probably killing companies limited to that volume. So it’s only a matter of time before sa(r,LC) is hit by one of these shifts, for any value of r.
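A quick numerical sketch shows how fast this blows up. It assumes, purely for illustration, that the production footprint is a ball of a given radius, so that volume scales as radius raised to the number of dimensions. The 30-foot balloon radius and the 50-dimension case are made-up values, and the function name is mine.

```python
def search_volume_ratio(footprint_radius: float, search_radius: float, dimensions: int) -> float:
    """Ratio of search-area volume to footprint volume for a ball-shaped footprint.

    In d dimensions, a ball's volume is proportional to radius ** d, so the
    constant of proportionality cancels out of the ratio.
    """
    return ((footprint_radius + search_radius) / footprint_radius) ** dimensions


# Hypothetical balloon of radius 30 feet, extended outward by 10 feet.
for d in (3, 10, 50):
    ratio = search_volume_ratio(30.0, 10.0, d)
    print(f"{d} dimensions: search area is {ratio:,.1f}x the footprint volume")
```

In three dimensions the extended volume is a bit over twice the original. With the same radii in 50 dimensions, it is more than a million times larger, which is why a modest increase in search radius gets so costly.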

Obviously, there will be some equilibrium value of r where the cost balances the risk, but that also implies there’s an equilibrium value for the expected time-to-live of large companies. Yikes! This explains why the average life expectancy of a Fortune 500 company is measured in decades. Another epiphany.

Internal venture groups and incubators represent a hack that attempts to circumvent this cold, hard calculation. The problem is that it’s difficult to explore a region of PFS without actually trying to implement a production function in that region. Sure, paper analysis and small experiments buy you some visibility, but not very much. Also, in most cases, you don’t get very good information from other firms on their explorations of PFS, unless you observe a massive success or failure, at which point it’s too late to do much about your position. That’s why search costs are so darn high. Enter corporate venture groups and startup incubators.

These initiatives require some capital investment by the large company. But this investment is then multiplied by the monetary capital of other investors as well as the human capital of the entrepreneurs. With careful management, a large company can get almost as much insight into explorations of PFS by these startups as it would from its own direct efforts. Moreover, because startups are willing to explore PFS farther from existing production footprints, the large company actually gets better search coverage of PFS.

This framework answers a key question about such initiatives. To what extent should corporate venture groups and startup incubators restrict the startups they back to those with a “strategic fit”? If you believe in my PFS hypothesis, the answer is close to zero or perhaps even less than zero (look for startups in areas outside your company’s area of expertise). Otherwise, they’re biasing their searches to the region of PFS that’s close to the region they’re already searching. It doesn’t increase the ability to survive that much. As far as I know, Google is the only company that adopts this approach. I think they’re right and now I think I understand why. Epiphany number three.

* There are some mathematical details here that need to be fleshed out to define this surface. But I don’t think they add to the discussion and my topology is really rusty.

** More mathematical details omitted. The only important one is that the extension outward doesn’t have to be by a constant radius. It can be a function of the point on the pf. In that case, r is a global scaling parameter for the function.

Thoughts on the Theory of the Firm

One of the interesting questions in economics is why markets coordinate some forms of production but firms coordinate others. Or to put it more sharply, if centralized economic planning doesn’t work for countries, why does it work for firms?

In principle, it should be possible to coordinate production solely through market transactions among individuals. Everyone would be some combination of an independent contractor and a capital owner. The challenge of course would be establishing the necessarily fluid markets, not to mention all the time everyone would have to spend negotiating contracts for their labor and capital.

Thus the standard approach to explaining firms starts with the Transaction Cost theory (typically attributed to Coase). Whenever the cost of a market-based mechanism is higher than a firm-based mechanism, a firm will end up coordinating production. Of course, to anyone who has ever started a new firm or worked any length of time at a large one, this explanation is not terribly satisfying. How do you know the relative costs in the first place and then explain the apparently wasted resources at large companies? Newer theories have tried to do better, but none seem to tell the whole story. See here for an overview of the literature.

Another unsatisfying aspect of mainstream Theories of the Firm is that they don’t explain the observed interactions of firms with the macroeconomy very well. For example, firms don’t responsively lower existing salaries when the demand for labor goes down or the supply goes up. Firms also seem to forgo internal price-based mechanisms that would allow them to respond more flexibly to macro shifts in cost and demand. Even more puzzling, there’s no good explanation of why and when some large firms grow dramatically while others die off. I’ve seen some attempts to address particular questions, but they seem like a patchwork rather than a coherent framework.

The always insightful Arnold Kling refers to a tweet from fellow GMU economist Garett Jones as one possible explanation: “Workers mostly build organizational capital, not final output”. Unfortunately, this is a tweet, not a theory. I was sort of playing around with the idea to see if I could get a decent theory out of it and I think I may have something. It ties together microeconomics, entrepreneurship, search theory, options theory, principal-agent theory, and group dynamics.

The basic idea is: firms don’t produce products; firms produce production functions. It seems obvious in retrospect, but a cursory search of the literature didn’t turn up anything similar. Someone has probably thought of this before. But perhaps I got lucky. So I’ll run with the ball for now.

What Is a Production Function?

In economics, a production function is an abstract model of how an economic actor turns inputs into outputs. Basically, it represents the formula or recipe for a product. Typically, we write Q=f(X), where Q is a vector of output quantities, f is the production function, and X is a vector of input quantities. While “real” production functions have very specific outputs and inputs, economists often simplify the world by assuming each actor only produces one output, Goods, and there is a standard set of general inputs such as Labor, Capital, and Land. If we know the cost curves of Labor, Capital, and Land, we can calculate a cost curve for Goods and examine the tradeoffs and synergies among Labor, Capital, and Land.
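To make Q=f(X) concrete, here is a minimal sketch of the simplified one-output case. The Cobb-Douglas form, the exponents, and the input prices are illustrative assumptions of this example, not anything the argument depends on; the function names are hypothetical.

```python
def cobb_douglas(labor, capital, land, scale=1.0, a=0.5, b=0.3, c=0.2):
    """Illustrative production function: Goods = scale * L^a * K^b * Land^c.

    The Cobb-Douglas form and the exponents are assumptions chosen for the
    example. With a + b + c = 1, doubling every input doubles output.
    """
    return scale * (labor ** a) * (capital ** b) * (land ** c)


def input_cost(labor, capital, land, wage=1.0, rental_rate=1.0, land_price=1.0):
    """Total cost of an input bundle at fixed (hypothetical) input prices."""
    return wage * labor + rental_rate * capital + land_price * land


# Two bundles with the same total cost can yield different output,
# which is the tradeoff among Labor, Capital, and Land.
balanced = cobb_douglas(4.0, 4.0, 4.0)
labor_heavy = cobb_douglas(10.0, 1.0, 1.0)
```

Both bundles cost 12 at unit prices, so comparing `balanced` and `labor_heavy` shows how the mix of inputs, not just their total cost, determines output.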

Typically, economic theory defines a firm in terms of its production function. However, anyone who has ever founded a startup or worked in a large company should find this definition puzzling. When I’ve started companies, I could not even clearly define what our inputs and outputs would be, let alone the formula for turning the former into the latter. When I’ve interacted with big companies, I typically see a lot of people and groups who have nothing to do with turning inputs into outputs: for example, the CTO, CIO, CFO, Corporate Development, Business Development, Product Management, Brand Management, and Market Research. In fact, when I think about the technology industry, the fraction of people actually involved in transforming inputs into outputs, even if you count the relevant management hierarchy, seems pretty small.

So what is it that entrepreneurs and most of the people at large companies are actually doing? My hypothesis is that they are exploring alternative production functions: trying to figure out ways to improve existing businesses and explore opportunities for completely new businesses. Intuitively, this seems reasonable given my experience, but I’d never thought of how to formalize the concept.

Now some production functions are conceptually “near” current ones in that they represent small refinements to the manufacturing process or modest enhancements to existing products. Other production functions are conceptually “far” from current ones in that they introduce radical new manufacturing technologies or generate revolutionary new products. I bet if you think about the people you’ve worked with, most of them are involved in figuring out how to produce things or what things to produce, rather than actually producing things. So they are producing production functions.

Production Function Space

The cool way to approach this kind of search problem is to posit a high-dimensional space of all the alternatives with a structure that allows us to define the concepts of “near” and “far”. In this case we have production function space.

The challenge is that most points in this space are not economically viable. Some of them define products that nobody wants (e.g., Apple Newtons). Some of them define products that we can’t make at our current level of technology (e.g., flying cars). Some of them define products that we could make but whose demand curve never intersects its cost curve (e.g., diamond-coated toothpicks).

Moreover, there are a lot of dependencies among points in production function space. So you can’t consider just one point in isolation; you have to consider configurations of points. Some Goods in one production function are Capital in another production function (e.g., Intel processors). In other cases, demand for some Goods exists only if there is also demand for other Goods (e.g., third party iPhone cases and Apple iPhones).

Most importantly, the goal is not just to find economically viable points. The goal is to find points that generate a lot of profit. Given that changes in technology and fashion cause these points to constantly shift relative to each other, and given the high dimensionality of the relationships among points, we have a rather complex optimization problem. I think of it as the portfolio optimization problem from finance, combined with the n-body problem from physics, combined with the protein folding problem from biology.

Given this level of complexity, we would expect that search strategies almost always use heuristics and trial-and-error rather than purely analytic optimization.
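As a rough illustration of what heuristic, trial-and-error search looks like, here is a minimal sketch of a random local search over a toy two-dimensional “profit landscape”. Everything in it is an assumption made for the example: the landscape `toy_profit`, the function names, and the idea of using a `step` parameter to stand in for how far from its current position a searcher is willing to look.

```python
import random


def toy_profit(point):
    """Hypothetical profit landscape with a single peak of 10 at (3, -1)."""
    x, y = point
    return 10.0 - (x - 3.0) ** 2 - (y + 1.0) ** 2


def local_search(profit, start, step=0.5, trials=1000, seed=0):
    """Trial-and-error search: try a random nearby point, keep it if better.

    `step` bounds how far each trial may move from the current best point.
    A small step mimics searching "near" a current position; a large step
    mimics searching "far" from it.
    """
    rng = random.Random(seed)  # seeded so the run is repeatable
    best = tuple(start)
    best_profit = profit(best)
    for _ in range(trials):
        candidate = tuple(c + rng.uniform(-step, step) for c in best)
        candidate_profit = profit(candidate)
        if candidate_profit > best_profit:
            best, best_profit = candidate, candidate_profit
    return best, best_profit


best_point, best_value = local_search(toy_profit, start=(0.0, 0.0))
```

A real landscape would shift over time and have many dimensions and many peaks, which is exactly why a simple climber like this can get stuck and why coverage of distant regions matters.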

The Implications

In subsequent posts, I plan to analyze this hypothesis from several different angles. I also hope to come up with some predictions that someone could test. But I thought it would be useful to throw out some gross speculation right now to show how this insight crystallized my thinking and pique your interest in exploring further:

– The value of a firm is the present value of the profit stream from actual current production functions plus the option value of potential future production functions.

– Large firms tend to explore neighborhoods of production function space relatively near the surfaces defined by their current products.

– Startups tend to explore neighborhoods of production function space relatively distant from the surfaces defined by everyone else’s current products.

– Firms that have released successful new products are more valuable because this success implies greater skill in searching production function space.

– Firms that have released successful new products are also more valuable because they then have a larger beachhead from which to explore greater regions of production function space in the future. Luck counts.

– Large firms acquire startups in part to increase their ability to search more distant regions of production function space.

– Due to network effects among colleagues and the uniqueness of each firm’s endowments, a given firm’s ability to search production function space is proportional to the number of employees it has and their length of service.

– It is harder to observe the true contribution of a particular employee to searching production function space than it is to observe the true contribution of an employee to a specific production function. Therefore, the principal-agent problem is worse than we think.

– Because the ability to search production function space increases with the number of people involved, “empire building” is a rational strategy for an employee to increase both his apparent and his actual value to the firm.
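The first speculation in the list above, that a firm’s value is the present value of profits from current production functions plus the option value of future ones, can be written as a small calculation. This is a minimal sketch under stated assumptions: profits arrive at the end of each period, a single constant discount rate applies, and the option value is simply passed in as a number, since how to estimate it is not something this post specifies. The function names are hypothetical.

```python
def present_value(profits, discount_rate):
    """Discount a stream of end-of-period profits back to today."""
    return sum(
        profit / (1.0 + discount_rate) ** period
        for period, profit in enumerate(profits, start=1)
    )


def firm_value(current_profits, discount_rate, option_value):
    """Value = PV of profits from current production functions
    + option value of potential future production functions.

    `option_value` is an input here (an assumption of this sketch);
    estimating it is the hard part and is not modeled.
    """
    return present_value(current_profits, discount_rate) + option_value


# Hypothetical firm: three years of profit of 110 per year, a 10% discount
# rate, and an assumed option value of 25 on future production functions.
example_value = firm_value([110.0, 110.0, 110.0], 0.10, 25.0)
```

Under this framing, two firms with identical current profits can differ in value purely through the `option_value` term, which is where skill at searching production function space would show up.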